One of the first questions we get when introducing our novel Agent-to-Agent (A2A) approach for the insurance market is simple: “What about security?”

More specifically:

What about prompt injection?

What about data exfiltration?

What about unauthorised access between agents?

These are not theoretical concerns. They are real, well-documented failure modes of modern Agentic AI systems. And are often misunderstood.

This post outlines our honest assessment of those risks, where they materialise for us, and why the AgLabs approach is deliberately designed to contain them.

The new threat model: from software bugs to behavioural exploits

Traditional software security is about controlling what code can do. Agentic AI systems introduce something different: they can be manipulated through what they are told.

Two ideas from recent research (well articulated by Simon Willison in his blog) are particularly useful here:

1. The “lethal trifecta”

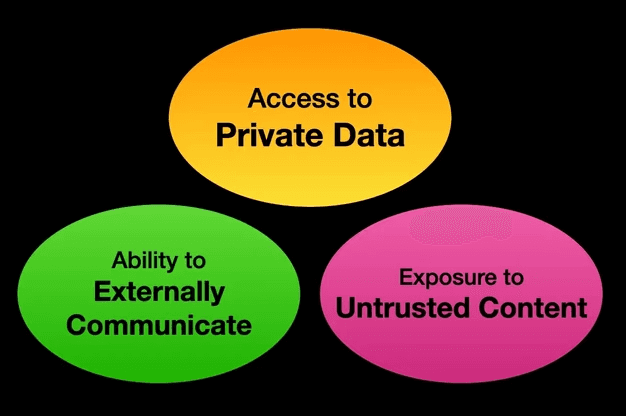

Agentic AI systems become dangerous when they combine three elements:

Access to sensitive data

Ability to externally communicate

Exposure to external input (untrusted)

That combination creates the conditions for data exfiltration or unintended actions.

2. The “rule of two”

Recent work by Meta highlights that If a system connects any two of the above, we should assume some risk.

If it connects all three, we should assume serious consequences are possible.

This framing is helpful because it shifts the conversation from “can prompt injection happen?” (it can)

to: “what can it actually do if it does?”

In what follows, we’ll show why in the AgLabs A2A model the answer is: not much, mainly because our A2A approach constrains how these capabilities can interact.

Where most Agentic AI systems can go wrong

Most current AI Agentic applications are built like this:

Capability | Typical setup |

|---|---|

Data access | Broad internal datasets |

Actions | API calls, workflows, automation |

Inputs | Emails, documents, user prompts |

This creates a classic lethal trifecta: sensitive data, external action capability , untrusted input.

In that setup, a prompt injection is not just possible, it is meaningful. An attacker could:

Extract data they shouldn’t see

Trigger actions they shouldn’t control

Move laterally across systems

Our A2A approach changes the problem entirely

In our AgLabs Agent-to-Agent workflow, the purpose of the system is explicit: exchange information between known counterparties until a risk is decision-ready.

That changes the security model fundamentally.

Let’s walk through a typical interaction:

A party (eg broker agent) sends a submission

Another party (eg underwriter agent) receives it

The underwriter agent asks for clarifications

The broker agent responds

The loop continues until the risk is ready

This is not a system trying to protect data from the counterparty.

It is a system explicitly designed to share data with that counterparty.

It’s also worth recognising a practical reality of today’s market: often market participants already overexpose information by default. For instance, in order to avoid repeated back-and-forth with multiple underwriters, brokers routinely share more data than any single counterparty strictly requires.

The key point is not that A2A results in more data being shared than today’s email-based approach (in practice, both involve ‘overexposure’).

The difference is in how that overexposure is handled: in our A2A design, data sharing becomes explicit, scoped, and auditable, rather than implicit and uncontrolled.

What is currently implicit and uncontrolled becomes programmable and governed.

So what happens if prompt injection occurs?

Let’s assume the worst case: a malicious individual or compromised agent attempts to inject prompts into the other agent during an A2A exchange.

What can it actually achieve? The answer is simple: it can 'exfiltrate' information that the other party was already willing to share, and nothing more. Why? Because of how access is defined: the boundary of exposure is defined upfront, and enforced by design.

Also, it is important to note that in our approach, A2A is not where decisions are made:

Agents handle coordination, clarification, and data exchange

Decision-making sits outside the A2A loop (within deterministic rule systems, and humans reviewing and making the final call)

This means that even if an agent’s behaviour is influenced during an interaction, It cannot directly trigger binding decisions or actions.

Which further limits the practical impact of prompt injection.

Security is defined at the boundary, not inside the model

In the AgLabs architecture, agents do not have open-ended access to internal systems.

They operate within explicit, pre-defined data scopes.

Before any interaction begins:

Each organisation defines what data can be shared

For a given submission or business line, a bounded dataset is exposed

The agent can only operate within that dataset

This means:

There is no ‘hidden’ database to exfiltrate

There is no broader system access to escalate into

There is no “surprise” data beyond what was already in scope

In other words, even if an agent is manipulated, it cannot exceed the permissions of the interaction.

The real control point: who is allowed to talk to your agent

This leads to the most important security principle our A2A approach: security is primarily about connection control, not prompt control.

Control layer | What it governs |

|---|---|

Identity | Who the counterparty is |

Permissions | What they are allowed to do |

Scope | What data is exposed |

Context | When and why interaction is allowed |

At AgLabs, this is handled through granular, permissioned connectivity:

Agents only communicate with approved counterparties

Permissions can be scoped by:

Organisation

Team

Line of business

Active commercial relationships (e.g. TOBAs)

All communication is authenticated and authorised (tokens, credentials, etc.)

If an agent is not explicitly trusted: it cannot connect. Full stop.

What about unauthorised access?

The question then becomes familiar:

“What if someone gains access to an agent they shouldn’t have access to?”

At that point, we are no longer in “Agentic AI risk”.

We are in well-known security territory:

Risk | Equivalent in traditional systems |

|---|---|

Agent impersonation | Stolen API credentials |

Data access abuse | Database breach |

Unauthorised calls | API misuse |

Mitigations are well understood:

Strong authentication

Short-lived credentials

Audit logs

Monitoring and anomaly detection

In other words, the risk is not new. The interface is.

Why the lethal trifecta doesn’t apply (in the same way)

Element | Traditional AI systems | AgLabs A2A model |

|---|---|---|

Sensitive data | Broad, often implicit access | Explicit, scoped per interaction |

External communication | Open-ended (APIs, web, plugins..) | Only with authorised counterparties |

Untrusted input | Open-ended (users, web, files) | Restricted to authorised agents |

The issue is not whether the three elements exist - they do!

The issue is whether they can be combined in a way that allows an attacker to extract new information or trigger unintended behaviour.

In the AgLabs A2A model:

Inputs only come from authorised counterparties

The agent only has access to pre-approved, scoped data for that interaction

External communication is limited to those same counterparties

Therefore, there is no path to extract data beyond what was already intentionally shared within that relationship.

So even if a prompt injection occurs: it cannot expand access, escalate privileges, or reach new destinations.

Which means the “lethal trifecta” is present in theory, but constrained in practice to the point where it cannot be exploited in a meaningful way.

Designing for inevitability, not prevention

Most AI security discussions assume: “We must prevent prompt injection.”

In practice, that’s unrealistic, because prompt injection is:

Cheap

Easy

Almost inevitable

So the correct approach should be instead : “Assume it will happen, and design so it doesn’t matter”!

That is the core of the AgLabs approach.

A familiar analogy: email already has this problem

Today’s insurance market already runs on:

Email attachments

Free text

External inputs

Unknown formatting

This is effectively an unstructured, unauthenticated prompt injection surface — at scale

Now compare that to A2A:

Email world | A2A world |

|---|---|

Anyone can initiate contact | Only authorised agents can connect |

Identity is weakly verified | Identity is cryptographically enforced |

Data sharing is discretionary | Data sharing is pre-scoped and controlled |

Detection is human-led | Enforcement is system-level |

What this means in practice

For any market participants:

You decide who your agent can talk to

You decide what data is shared

You retain full control of decisions

Every interaction is traceable and auditable

And most importantly: the system cannot leak what it was never able to access in the first place.

Final thoughts

The three elements of the “lethal trifecta” are present in an our A2A approach. Our agents handle data, can communicate externally, and interact with inputs beyond direct organisational control.

So the question is not whether the risk exists in theory.

It is whether the system allows that risk to be exploited in practice.

In our permissioned A2A model, it does not:

Data is explicitly scoped to each interaction

Counterparties are explicitly authorised

Communication is restricted to those same parties

There is no path to expand access, reach new systems, or extract information beyond what was already intentionally shared.

That is the key distinction of the AgLabs approach., which is not about preventing prompt injection. It is about designing a system where, even if it happens, nothing meaningful can go wrong.

This is what enables a secure, scalable Agent-to-Agent insurance market — by design, not by patching.